ResNet-50

ResNet-50, short for Residual Network-50, is a convolutional neural network architecture that was introduced by Microsoft Research in 2015. It is one of the variants of the ResNet family, which is known for its deep and powerful architectures used for image classification and other computer vision tasks.

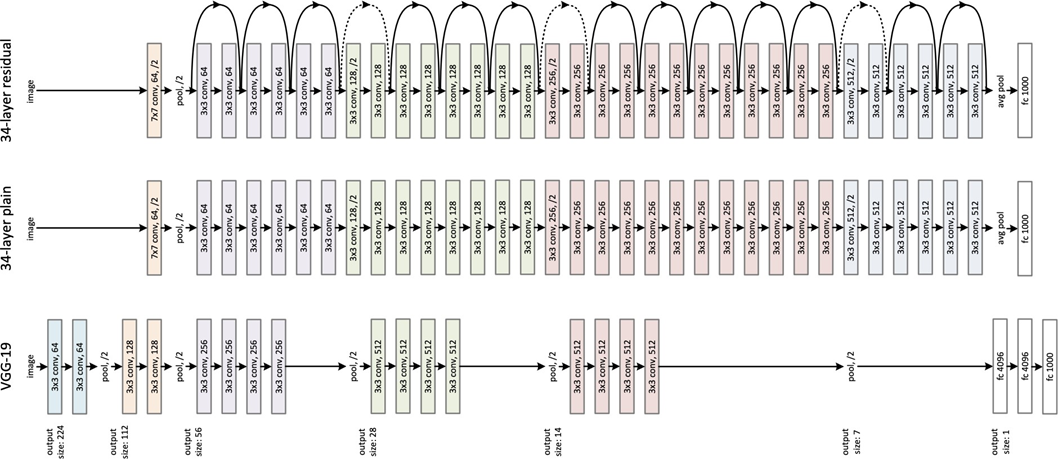

The "50" in ResNet-50 refers to the number of weighted layers in the network, making it a relatively deep neural network. ResNet-50 incorporates residual learning, which helps to address the problem of degradation in deeper networks. Degradation occurs when adding more layers to an already deep network leads to higher training error, not just higher test error, so it cannot be explained by overfitting; it reflects how hard very deep plain networks are to optimize. Residual learning introduces skip connections, also known as shortcut connections, which let the input of a block bypass the block's layers and be added directly to its output. This way, each block only has to learn a residual mapping F(x) = H(x) - x rather than the full mapping H(x), which is easier to optimize and counteracts the degradation problem.
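The idea of a residual mapping can be illustrated with a minimal sketch in plain Python. It assumes a hypothetical `residual_block` helper and toy list-based vectors instead of real convolutional feature maps; the residual functions are made up for illustration. The block computes F(x) and adds the input back, so when F outputs zeros the block reduces to the identity.

```python
from typing import Callable, List

Vector = List[float]


def residual_block(x: Vector, residual_fn: Callable[[Vector], Vector]) -> Vector:
    """Return F(x) + x, where F is the residual function of the block."""
    fx = residual_fn(x)
    if len(fx) != len(x):
        raise ValueError("F(x) and x must have the same shape to be added")
    # Element-wise addition of the shortcut (x) and the residual branch (F(x)).
    return [f + xi for f, xi in zip(fx, x)]


def zero_residual(x: Vector) -> Vector:
    """Hypothetical residual branch that contributes nothing (all zeros)."""
    return [0.0 for _ in x]


def small_residual(x: Vector) -> Vector:
    """Hypothetical residual branch: a small correction proportional to x."""
    return [0.1 * xi for xi in x]


features = [1.0, -2.0, 3.0]
identity_out = residual_block(features, zero_residual)
corrected_out = residual_block(features, small_residual)
print(identity_out)   # the input passes through unchanged
print(corrected_out)  # the input plus a small learned correction
```

With a zero residual the block simply passes its input through, which is why adding such blocks should not make a network worse than its shallower counterpart.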

ResNet-50 consists of a series of convolutional layers, each typically followed by batch normalization and a rectified linear unit (ReLU) activation. The network also includes a max pooling layer near the input, and a global average pooling layer followed by a single fully connected layer at the end for classification. It is commonly pre-trained on a large dataset such as ImageNet, which contains millions of labeled images from various categories.

ResNet-50 has been widely used in various computer vision tasks, including image classification, object detection, and image segmentation. Its deep architecture and skip connections make it capable of extracting intricate features from images, leading to improved accuracy compared to shallower networks.

The introduction of ResNet-50 and its subsequent variations has greatly contributed to advancements in computer vision research, enabling breakthroughs in image recognition and understanding.

Architecture:

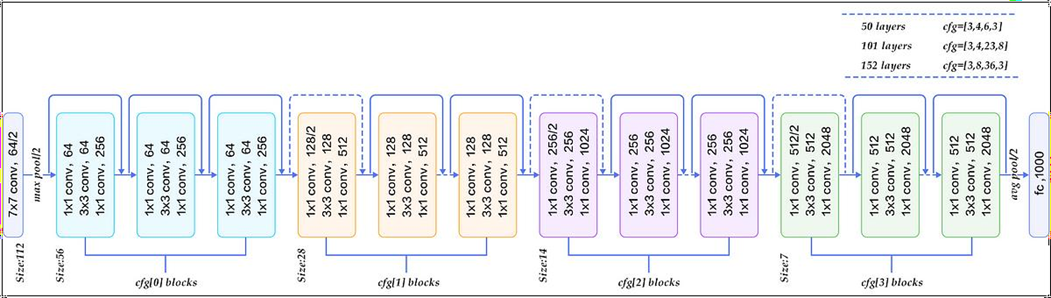

The architecture of ResNet-50 consists of several building blocks known as residual blocks. Each residual block contains multiple convolutional layers with batch normalization and ReLU activations. The key element in ResNet-50's architecture is the introduction of skip connections or shortcut connections, which allow the network to bypass some layers and retain information from earlier layers.

Here are the main components and rules of ResNet-50's architecture:

1. Convolutional Layers: ResNet-50 starts with a single 7x7 convolutional layer with 64 filters and stride 2, followed by a 3x3 max pooling layer with stride 2. This initial stage extracts low-level features from the input image and reduces its spatial resolution.

2. Residual Blocks: ResNet-50 consists of 16 residual blocks, organized into four stages that contain 3, 4, 6, and 3 blocks respectively. Within a stage, all blocks produce feature maps of the same size, and the number of channels doubles from one stage to the next.

3. Convolutional Layers in Residual Blocks: Each residual block is a bottleneck block with three convolutional layers: a 1x1 convolution that reduces the number of channels, a 3x3 convolution that operates on the reduced representation, and a final 1x1 convolution that expands the number of channels again to four times the bottleneck width. These convolutional layers learn the residual mapping.

4. Skip Connections: The skip connections in ResNet-50 allow the network to shortcut or bypass some convolutional layers. A skip connection takes the input of a residual block and adds it element-wise to the output of the block's convolutional layers. ResNet uses addition here; concatenation is the approach taken by other architectures such as DenseNet.

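5. Identity Mapping: To facilitate the skip connections, ResNet-50 uses the concept of identity mapping. In most residual blocks, the shortcut connection passes the block's input to the addition without any transformation. In the first block of each stage, where the number of channels or the spatial size changes, the shortcut instead applies a 1x1 convolution (a projection shortcut) so that the shapes match. This helps to preserve important information and gradients.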

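6. Downsampling: ResNet-50 uses max pooling only once, directly after the initial convolution. The later reductions in the spatial dimensions of the feature maps are performed by stride-2 convolutions in the first residual block of the second, third, and fourth residual stages. Each of these steps halves the width and height of the feature maps.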

7. Fully Connected Layer: At the end of ResNet-50, a global average pooling layer is followed by a single fully connected layer with a softmax activation. This layer performs the classification based on the learned features from earlier layers.
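The block layout described in the list above can be sketched as a small configuration builder. This is only an illustration: the block counts (3, 4, 6, 3) follow the text, while the bottleneck widths (64 to 512), the expansion factor of 4, the stride placement, and the names `BottleneckSpec` and `build_block_specs` are assumptions based on the commonly cited ResNet-50 configuration. No real layers are created; the sketch only tracks channel counts and decides where a projection shortcut would be needed.

```python
from dataclasses import dataclass
from typing import List

# (bottleneck width, number of blocks, stride of the first block) per residual stage.
# Widths and strides are assumed values from the commonly cited configuration.
STAGES = [(64, 3, 1), (128, 4, 2), (256, 6, 2), (512, 3, 2)]
EXPANSION = 4  # the final 1x1 convolution outputs 4x the bottleneck width


@dataclass(frozen=True)
class BottleneckSpec:
    residual_stage: int
    in_channels: int
    width: int
    out_channels: int
    stride: int
    projection_shortcut: bool


def build_block_specs(stem_channels: int = 64) -> List[BottleneckSpec]:
    """List every bottleneck block with its channel sizes and shortcut type."""
    specs: List[BottleneckSpec] = []
    in_channels = stem_channels
    for stage_number, (width, num_blocks, first_stride) in enumerate(STAGES, start=1):
        out_channels = width * EXPANSION
        for block_index in range(num_blocks):
            stride = first_stride if block_index == 0 else 1
            # An identity shortcut only works if input and output shapes match.
            needs_projection = stride != 1 or in_channels != out_channels
            specs.append(BottleneckSpec(stage_number, in_channels, width,
                                        out_channels, stride, needs_projection))
            in_channels = out_channels
    return specs


block_specs = build_block_specs()
for spec in block_specs:
    shortcut = "1x1 projection" if spec.projection_shortcut else "identity"
    print(f"stage {spec.residual_stage}: {spec.in_channels:>4} -> {spec.width:>3} "
          f"-> {spec.out_channels:>4}, stride {spec.stride}, {shortcut}")
```

Under these assumptions, only the first block of each residual stage needs a projection shortcut; the remaining twelve blocks use plain identity shortcuts.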

The skip connections in ResNet-50 enable the network to overcome the problem of vanishing gradients by allowing the gradients to flow directly from later layers to earlier layers. This facilitates the training of deeper networks and helps in capturing more complex and abstract features. The residual connections also provide a shortcut for the network to learn residual mappings, making it easier for the network to optimize and converge.
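A toy calculation can make the gradient argument concrete. The sketch below is a deliberately simplified scalar model, not how ResNet-50 is actually trained: it assumes every layer has the same local derivative (a made-up value of 0.1) and ignores activations and normalization. A plain layer y = f(x) contributes a factor f'(x) to the chain rule, while a residual layer y = x + f(x) contributes 1 + f'(x).

```python
def plain_chain_gradient(local_grad: float, depth: int) -> float:
    """Gradient through `depth` stacked plain layers y = f(x), with f'(x) = local_grad."""
    grad = 1.0
    for _ in range(depth):
        grad *= local_grad
    return grad


def residual_chain_gradient(local_grad: float, depth: int) -> float:
    """Gradient through `depth` residual layers y = x + f(x), with f'(x) = local_grad."""
    grad = 1.0
    for _ in range(depth):
        # The shortcut contributes the constant 1, so the factor never drops to local_grad.
        grad *= 1.0 + local_grad
    return grad


plain = plain_chain_gradient(0.1, 20)
residual = residual_chain_gradient(0.1, 20)
print(f"plain chain:    {plain:.3e}")
print(f"residual chain: {residual:.3f}")
```

In this toy setting the plain chain's gradient shrinks to the order of 1e-20 after 20 layers, while the residual chain's gradient stays above 1 because the shortcut always passes a copy of the gradient through.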

By incorporating skip connections and residual learning, ResNet-50 has achieved impressive results in various computer vision tasks and has become a widely used architecture in the field of deep learning. The dotted shortcuts in the architecture image represent 1x1 convolutions that make the sizes of the two added tensors the same.

Here is a simplified table representing the architecture of ResNet-50:

| Stage | Output Size | Number of Residual Blocks |
|---|---|---|
| Stage 1 | 112x112 | 0 (initial 7x7 convolution) |
| Stage 2 | 56x56 | 3 |
| Stage 3 | 28x28 | 4 |
| Stage 4 | 14x14 | 6 |
| Stage 5 | 7x7 | 3 |

Note: The input size is typically 224x224 pixels.
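The output sizes in the table can be checked with the standard convolution output-size formula, floor((size + 2 x padding - kernel) / stride) + 1. The sketch below assumes the kernel, stride, and padding values commonly used in ResNet-50 implementations; these values and the helper names are not stated in this article. It also assumes the stride-2 step of each later stage sits in a 3x3 convolution; some implementations place it in a 1x1 convolution instead, which gives the same sizes.

```python
from typing import List, Tuple


def conv_output_size(size: int, kernel: int, stride: int, padding: int) -> int:
    """Spatial output size of a convolution or pooling layer along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


# (description, kernel, stride, padding) for each step that can change the size.
# Kernel/stride/padding values are assumptions taken from common implementations.
STEPS: List[Tuple[str, int, int, int]] = [
    ("Stage 1: 7x7 convolution", 7, 2, 3),
    ("Stage 2: 3x3 max pooling", 3, 2, 1),
    ("Stage 2: residual blocks (no downsampling)", 3, 1, 1),
    ("Stage 3: first block, stride-2 convolution", 3, 2, 1),
    ("Stage 4: first block, stride-2 convolution", 3, 2, 1),
    ("Stage 5: first block, stride-2 convolution", 3, 2, 1),
]


def trace_sizes(input_size: int = 224) -> List[int]:
    """Return the spatial size before the first step and after every step."""
    sizes = [input_size]
    for _, kernel, stride, padding in STEPS:
        sizes.append(conv_output_size(sizes[-1], kernel, stride, padding))
    return sizes


sizes = trace_sizes(224)
for (description, _, _, _), size in zip(STEPS, sizes[1:]):
    print(f"{description}: {size}x{size}")
```

For a 224x224 input this traces 224 -> 112 -> 56 -> 56 -> 28 -> 14 -> 7, which matches the output sizes listed in the table.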

Each residual block within a stage consists of three convolutional layers and a skip connection. What varies between stages is the number of blocks and the number of channels, not the structure of the block.

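The "50" in the name counts the layers with learnable weights on the main path: the initial convolution, the 48 convolutions inside the 16 residual blocks (three per block), and the final fully connected layer. Batch normalization, pooling, and softmax layers, as well as the 1x1 convolutions on projection shortcuts, are not included in this count.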
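One common way to arrive at the figure of 50 is to count only the weighted layers on the main path. The short sketch below does this arithmetic, assuming three convolutions per residual block and the block counts 3, 4, 6, and 3 from Stages 2 to 5; the variable names are illustrative only.

```python
BLOCKS_PER_STAGE = [3, 4, 6, 3]  # residual blocks in Stages 2 to 5
CONVS_PER_BLOCK = 3              # 1x1, 3x3, 1x1 convolutions in each bottleneck block

initial_conv_layers = 1          # the 7x7 convolution at the input
fully_connected_layers = 1       # the classifier at the output

residual_conv_layers = sum(BLOCKS_PER_STAGE) * CONVS_PER_BLOCK
total_weighted_layers = initial_conv_layers + residual_conv_layers + fully_connected_layers

print(residual_conv_layers)   # 48
print(total_weighted_layers)  # 50
```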

This table provides a high-level overview of the architecture of ResNet-50, showcasing the stages and the number of residual blocks within each stage.

Advantages of ResNet-50:

ResNet-50 has several advantages and powerful features that contribute to its effectiveness in various computer vision tasks. Here are some of its key advantages:

1. Deep Architecture: ResNet-50 is a deep neural network with 50 layers, allowing it to capture and learn complex features from images. The depth of the network enables it to model intricate patterns and representations, leading to improved accuracy in image classification and other computer vision tasks.

2. Residual Learning and Skip Connections: The introduction of skip connections in ResNet-50 addresses the problem of vanishing gradients in deeper networks. By allowing the gradients to flow directly from later layers to earlier layers, ResNet-50 enables easier optimization and training of deep models. The residual learning framework helps the network learn residual mappings, making it more efficient at capturing and learning the differences or residuals between input and output.

3. Improved Accuracy: The ResNet family achieved state-of-the-art performance when it was introduced; an ensemble of residual networks won the classification task of the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) in 2015. ResNet-50's deep architecture, combined with skip connections and residual learning, allows it to extract rich and discriminative features from images, leading to higher accuracy than shallower networks in image classification, object detection, and other computer vision tasks.

4. Transfer Learning: ResNet-50 is often pre-trained on large-scale datasets like ImageNet, which contains millions of labelled images. The pre-training allows the network to learn general image representations, which can be transferred and fine-tuned for specific tasks with smaller datasets. This transfer learning capability of ResNet-50 reduces the need for training from scratch and enables faster convergence and improved performance on new tasks.

5. Versatility: ResNet-50's architecture and learned features can be utilized for a wide range of computer vision tasks, including image classification, object detection, image segmentation, and more. Its versatility makes it a popular choice among researchers and practitioners for various real-world applications.

6. Availability of Pre-trained Models: ResNet-50, along with other variants of the ResNet family, is widely available as pre-trained models. These pre-trained models can be easily used and fine-tuned for specific tasks, saving significant time and computational resources.

The combination of its deep architecture, skip connections, and residual learning has made ResNet-50 a powerful and widely adopted neural network architecture in the field of computer vision. Its advantages in accuracy, transfer learning, and versatility have led to numerous breakthroughs in image recognition and understanding.

By CharanSai